This series of blog posts discusses the creation of a new account type implemented in JavaScript. Over the course of these posts, I use the development of my Web Forums extension to explain the necessary actions in creating new account types. I hope to add a new post once every two weeks (I cannot guarantee it, though).

In the previous blog post, I gave a broad overview of the overall structure of the backend interfaces and the components of an account implementation. Now, we will put together the components needed to get your extension's folder displayed in the folder pane.

Account implementation decisions

Before you start implementing, you have to decide how to structure the account. The first decision is what the internal account type will be. This will be the value of nsIMsgAccount::type and will dictate the contract IDs for several interfaces. The next decision is what the account URI scheme is. This will be the scheme for the URI and dictates the contract IDs for a few more interfaces; for mailbox accounts, this scheme will be mailbox. For my extension, I have decided to choose webforum for both of these.
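To make the link between these two choices and the contract IDs concrete, here is a minimal sketch. The three contract ID patterns are the ones that appear later in this post; the helper name contractIDsFor, its parameters, and the property names on the returned object are hypothetical, and the split between "keyed on the type" and "keyed on the scheme" is my reading of those patterns rather than an exhaustive list.

```javascript
// Hypothetical helper: derive the contract IDs that depend on the account
// type and on the URI scheme. Only the ID patterns come from this post.
function contractIDsFor(accountType, uriScheme) {
  return {
    // Keyed on the account type.
    protocolInfo: "@mozilla.org/messenger/protocol/info;1?type=" + accountType,
    server: "@mozilla.org/messenger/server;1?type=" + accountType,
    // Keyed on the URI scheme (assumption based on the "name=" parameter).
    folderFactory: "@mozilla.org/rdf/resource-factory;1?name=" + uriScheme
  };
}

let ids = contractIDsFor("webforum", "webforum");
console.log(ids.protocolInfo);
console.log(ids.server);
console.log(ids.folderFactory);
```

Using the same string for both, as I do, keeps the mapping easy to remember, but nothing in the sketch requires it.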

Another important decision to make will be the server for which you will be doing most of your initial tests. It should be something that is manageable for debugging purposes. In my case, I've decided to bestow this honor on the Kompozer web forum, because it seems lower traffic than any other forum I'm reasonably interested in. As you may notice, I am starting my extension with the intention of focusing on phpBB access; it's sufficiently widely used that I expect that only supporting phpBB at first would still make a worthwhile extension.

Once you have decided that, you should take the time to study how things will be structured: what determines a folder? What determines a message? A thread? Replies? How are you going to be carrying out new actions, such as checking for new messages? What internal information are you going to need to save for accessing? Heck, what determines the "server" to begin with? In my case, the DOM inspector is an invaluable tool for answering these questions. Don't worry about how to figure out the list of possible subscribable folders yet. Subscription will come into play much later; we are going to start by just hardcoding this list somewhere.

In my case, I am choosing to structure the folders as a Category → Forum hierarchy. I'll pick a few of the smaller forums to use so I don't overwhelm debug logs.

Implementing protocol information

Since nsIMsgProtocolInfo is the shortest and simplest of the interfaces, let me start by implementing this one. There are a total of 12 attributes and 1 function on this interface, so the code will not be hard to write. Following is an implementation of the code [1]:

    wfService.prototype = {
      contractID: ["@mozilla.org/messenger/protocol/info;1?type=webforum"],
      QueryInterface: XPCOMUtils.generateQI([Ci.nsIMsgProtocolInfo]),

      // Storage root for this account type: <profile>/WebForums, created on
      // first access if it does not exist yet.
      get defaultLocalPath() {
        let dirSvc = Cc["@mozilla.org/file/directory_service;1"]
                       .getService(Ci.nsIProperties);
        let file = dirSvc.get("ProfD", Ci.nsIFile);
        file.append("WebForums");
        if (!file.exists())
          file.create(Ci.nsIFile.DIRECTORY_TYPE, 0775);
        return file;
      },

      get serverIID() { return Ci.nsIMsgIncomingServer; },
      get defaultDoBiff() { return true; },
      get requiresUsername() { return false; },
      // -1 means there is no meaningful default port for this account type.
      getDefaultServerPort: function (secure) { return -1; },
      get canDelete() { return true; },
      get canLoginAtStartup() { return true; },
      get canGetMessages() { return true; },
      get canGetIncomingMessages() { return false; },
      get showComposeMsgLink() { return false; },
      get specialFoldersDeletionAllowed() { return false; }
    };

The meaning of each of the attributes can be found in more detail on the MDC page. The properties used by the account wizard mostly control initial preference values; those used by the UI code mostly control which UI elements are enabled. I have also left out of the implementation those attributes which are unused.

Perhaps the most leeway you have is in implementing defaultLocalPath. In this case, I have adapted the RSS implementation, which does not allow users to change this location. The other implementation (used by IMAP, POP, NNTP, Movemail, and Local Folders) uses a preference to return the default path. An example implementation of this method looks like this:

    // Alternative: read the storage root from a preference instead of
    // hardcoding it.
    get defaultLocalPath() {
      this._prefs = Cc["@mozilla.org/preferences-service;1"]
                      .getService(Ci.nsIPrefService)
                      .getBranch("extensions.webfora.");
      let pref = this._prefs.getComplexValue("rootDir", Ci.nsIRelativeFilePref);
      return pref.file;
    },

Once you have completed that, you should test that the service implementations work as expected via test snippets in the Error Console. The account manager can be mean when it comes to unusable account types [2], so this will help fix the most obvious bugs before the account manager attempts to do it for you.
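As an idea of what such a test snippet could look like, here is a rough sketch of a sanity check. The list of member names is taken from the wfService listing above; the helper findBrokenMembers and the stand-in object are hypothetical, and the stand-in exists only so the snippet runs outside Thunderbird.

```javascript
// Attribute names taken from the wfService listing in this post.
let EXPECTED_ATTRIBUTES = [
  "defaultLocalPath", "serverIID", "defaultDoBiff", "requiresUsername",
  "canDelete", "canLoginAtStartup", "canGetMessages",
  "canGetIncomingMessages", "showComposeMsgLink",
  "specialFoldersDeletionAllowed"
];

// Hypothetical helper: returns the names of members that are missing or that
// throw when read. An empty array means the basic shape looks fine.
function findBrokenMembers(info) {
  let broken = [];
  for (let i = 0; i < EXPECTED_ATTRIBUTES.length; i++) {
    let name = EXPECTED_ATTRIBUTES[i];
    try {
      if (info[name] === undefined)
        broken.push(name);
    } catch (ex) {
      broken.push(name);
    }
  }
  if (typeof info.getDefaultServerPort !== "function")
    broken.push("getDefaultServerPort");
  return broken;
}

// In the Error Console, `info` would be the real service, obtained with
//   Cc["@mozilla.org/messenger/protocol/info;1?type=webforum"]
//     .getService(Ci.nsIMsgProtocolInfo);
// The plain object below is only a stand-in with made-up values.
let standIn = {
  defaultLocalPath: "/path/to/profile/WebForums",
  serverIID: "nsIMsgIncomingServer",
  defaultDoBiff: true,
  requiresUsername: false,
  canDelete: true,
  canLoginAtStartup: true,
  canGetMessages: true,
  canGetIncomingMessages: false,
  showComposeMsgLink: false,
  specialFoldersDeletionAllowed: false,
  getDefaultServerPort: function (secure) { return -1; }
};

console.log(findBrokenMembers(standIn).length === 0 ? "looks OK" : "broken");
```

A check like this only catches missing or throwing members; it says nothing about whether the values are sensible, so it complements rather than replaces trying the account in a test profile.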

Server and root folder discovery

Before I go any further with code, let me take a minute to explain how servers and folders interact. The server objects themselves do surprisingly little in the UI; the most common property calls are probably rootFolder and type. This is true even of what you might think of as server attributes: the bold display name, the "has new messages" tree view properties, etc. Instead, those features can be found on the root folder, which is a "fake" folder object. Most of what we care about in this part happens on the root folder instead of the server; however, if you browse the implementation in nsMsgDBFolder, you can see that some of the property calls get forwarded back to the server for root folders.

The backend code will create server objects early on and hold onto them for the duration of the program (or until they are deleted). The server objects then create the root folders which then create subfolders as necessary. Links that go backwards (parent links and server links) are weak references to avoid refcount cycles. Most of this work is hidden in nsMsgDBFolder for you. After creation, various properties are accessed at will; some properties will be loaded in from the database info (a topic for later).

In more concrete code terms, the following are the steps in loading the folder pane:

- The account manager loads the mail.accountmanager.accounts preference; the values here are a comma-separated list of account keys.

- For each account key, an account is instantiated. Per-account data is read off of the mail.account.<key> preference branch; specifically, the server preference contains the server key to load and the identities preference is a comma-separated list of identity keys.

- The identities and servers are then bootstrapped. In the case of servers, the server is created as an object with the @mozilla.org/messenger/server;1?type=<type> contract ID. The server pref branch is mail.server.<key>; key preferences here are type, the type for the contract ID; userName, the (optional) username of the server; and hostname, the (required) host of the server.

- The account manager sets the key, type, username, and hostName properties, in that order, on the server object instance and then retrieves the port property. The (type, username, hostName, port) tuple is the unique identifier for a server: no two servers can have the same combination of these values. Now your server is constructed and returned to the folder pane.

- The folder pane retrieves the rootFolder of your server. If the server was saved in the expanded state, subFolders is retrieved recursively for the folders that were saved as open. The folder pane also calls performExpand() on the server if the root folder is expanded.
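The preference walk in the steps above can be sketched in plain JavaScript. This is only a model of the lookups, not the account manager's actual code: the prefs object is a hypothetical stand-in for the preference service, the keys and values in it are illustrative, and describeAccounts is a made-up name.

```javascript
// Hypothetical stand-in for the preference service: a plain object keyed by
// full preference name. All values here are illustrative.
let prefs = {
  "mail.accountmanager.accounts": "account1,account99",
  "mail.account.account1.server": "server1",
  "mail.account.account1.identities": "id1,id2",
  "mail.account.account99.server": "server99",
  "mail.account.account99.identities": "id99",
  "mail.server.server1.type": "imap",
  "mail.server.server1.hostname": "mail.example.invalid",
  "mail.server.server1.userName": "someone",
  "mail.server.server99.type": "webforum",
  "mail.server.server99.hostname": "forum.example.invalid"
};

// Follows the same chain of lookups: accounts list -> account branch ->
// server key -> server branch -> contract ID built from the type.
function describeAccounts(prefs) {
  let result = [];
  let accountKeys = prefs["mail.accountmanager.accounts"].split(",");
  for (let i = 0; i < accountKeys.length; i++) {
    let accountBranch = "mail.account." + accountKeys[i] + ".";
    let serverKey = prefs[accountBranch + "server"];
    let serverBranch = "mail.server." + serverKey + ".";
    let type = prefs[serverBranch + "type"];
    result.push({
      account: accountKeys[i],
      identities: prefs[accountBranch + "identities"].split(","),
      serverKey: serverKey,
      contractID: "@mozilla.org/messenger/server;1?type=" + type,
      hostname: prefs[serverBranch + "hostname"],
      // userName is optional, so fall back to an empty string.
      username: prefs[serverBranch + "userName"] || ""
    });
  }
  return result;
}

let accounts = describeAccounts(prefs);
console.log(accounts[1].contractID);
```

The point to take away is that the type preference alone decides which server implementation gets instantiated, which is why a typo there makes the whole account unusable.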

So that explains how your server gets created; how do your folders get created? nsMsgIncomingServer::GetRootFolder [3] calls nsMsgIncomingServer::CreateRootFolder, which calls serverURI and uses it to construct an RDF resource. serverURI creates a URI of the form localstoretype://[<username>@]<hostname> by default. This URI is actually the URI of your root folder; other code will assume that this invariant holds true (especially subscribe!). Other folders are created when you get the subFolders property. When the folder URI is parsed (which is pretty much the first time a useful property is called), getIncomingServerType is called to get the type of the server.
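For reference, the default URI form is simple enough to sketch in a few lines. The function name buildServerURI is hypothetical, the host and user names are placeholders, and escaping of special characters is deliberately left out, so treat this as a model of the format rather than of the real C++ code.

```javascript
// Sketch of the default form described above:
//   localstoretype://[<username>@]<hostname>
// No escaping is performed here.
function buildServerURI(localStoreType, username, hostname) {
  let uri = localStoreType + "://";
  if (username)
    uri += username + "@";
  return uri + hostname;
}

// Without a username, the "<username>@" part is dropped entirely.
console.log(buildServerURI("webforum", "", "forum.example.invalid"));
// prints "webforum://forum.example.invalid"
console.log(buildServerURI("webforum", "jdoe", "forum.example.invalid"));
// prints "webforum://jdoe@forum.example.invalid"
```

Since this string doubles as the root folder's URI, whatever you return from localStoreType has to match the scheme your folder factory is registered for.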

In summary, you may need to implement localStoreType and possibly serverURI on your server, and subFolders, getIncomingServerType, and CreateBaseMessageURI on your folder [4]. First we'll start by getting the root folder display working:

    function wfServer() {
      // Hook this object up to the C++ base implementation of the server.
      JSExtendedUtils.makeCPPInherits(this,
        "@mozilla.org/messenger/jsincomingserver;1");
    }
    wfServer.prototype = {
      contractID: ["@mozilla.org/messenger/server;1?type=webforum"],
      QueryInterface: JSExtendedUtils.generateQI([]),
      // Becomes the scheme of the server (and root folder) URI.
      get localStoreType() { return "webforum"; }
    };

    function wfFolder() {
      // Same idea for folders; the C++ side is reachable as this._inner.
      JSExtendedUtils.makeCPPInherits(this,
        "@mozilla.org/messenger/jsmsgfolder;1");
    }
    wfFolder.prototype = {
      contractID: "@mozilla.org/rdf/resource-factory;1?name=webforum",
      QueryInterface: JSExtendedUtils.generateQI([]),
      getIncomingServerType: function () { return "webforum"; }
    };

At this point, I recommend you again check to make sure resources are properly registering via the Error Console. With that in hand, it's time to modify your preferences manually. I personally recommend changing settings via editing prefs.js while Thunderbird is off so you don't accidentally confuse the account manager. I'm using the keys account99 and server99 to make it plain which account is being edited. First, I copy the mail.identity.id3 pref branch (any identity would do) and change the id3 to id99. Then I copy the mail.account.account3 pref branch and change the 3's to 99's.

The next changes are the server preferences, which are going to be the most unique. directorydirectory-rel are set to a folder where I want to store stuff ([ProfD]WebForums/kompozer, in my case). download_on_biff and login_at_startup are set to false (to avoid

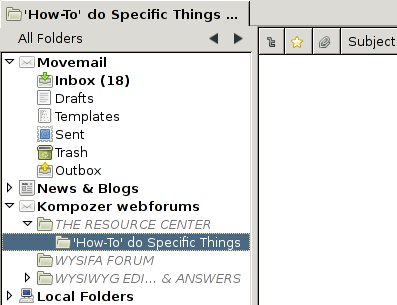

dealing with biff for a bit longer). name is set to be the display name of the server. hostname and userName were set to the appropriate values for this account [5]. To the preference mail.accountmanager.accounts, I appended account99. With those changes done, I then start up Thunderbird to see the outcome:

Perhaps I should have chosen a shorter name for display.
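For readers who want to see the edits spelled out, the following sketch lists the preferences touched above in prefs.js form. The preference names come from the description in this post; every value marked as a placeholder is made up, and the user_pref function defined at the top is only a stand-in that records the calls so the sketch can run outside Thunderbird.

```javascript
// Stand-in for the user_pref() calls found in prefs.js; it just records each
// preference in a map so this sketch runs anywhere.
let recorded = {};
function user_pref(name, value) { recorded[name] = value; }

// Account branch (the identity branch is copied wholesale from an existing
// identity, so it is not repeated here).
user_pref("mail.account.account99.server", "server99");
user_pref("mail.account.account99.identities", "id99");

// Server branch. Host name, user name, display name, and the absolute
// directory are placeholders.
user_pref("mail.server.server99.type", "webforum");
user_pref("mail.server.server99.hostname", "forum.example.invalid");
user_pref("mail.server.server99.userName", "placeholder-user");
user_pref("mail.server.server99.name", "Example Forum");
user_pref("mail.server.server99.directory", "/path/to/profile/WebForums/kompozer");
user_pref("mail.server.server99.directory-rel", "[ProfD]WebForums/kompozer");
user_pref("mail.server.server99.download_on_biff", false);
user_pref("mail.server.server99.login_at_startup", false);

// account99 is appended to whatever list is already there; "account1" is a
// placeholder for the existing entries.
user_pref("mail.accountmanager.accounts", "account1,account99");

console.log(Object.keys(recorded).length + " preferences set");
```

If the new account silently disappears from mail.accountmanager.accounts after a restart, that is the behavior described in note [2], and the usual culprit is the type preference or a component that failed to register.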

Folder discovery

Now that the root folder is displayed, we need to get the folders added to the display pane.

Somehow, we need to figure out what the folder hierarchy looks like—it has to be stored in some file, in other words. The NNTP code uses the newsrc file to store its folder tree, and local folders looks at the directory hierarchy for its map, to name two examples.

In my code, I'm going to use a JSON file to store this data. I've considered SQLite, but I don't really need synchronization (per-server files work nicely here), and I'm mostly doing simple lookups. Plus, I can probably handle automatic schema migration more easily in JSON.
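To give a feel for what such a per-server JSON file might hold, here is a minimal sketch. The only names suggested by the code in this post are categories and name; the forums property, the sample entries, and the childNames helper are all hypothetical, and the real layout in my extension may differ.

```javascript
// Hypothetical per-server database contents, mirroring the
// Category -> Forum hierarchy. Entries are made-up examples.
let exampleDB = {
  categories: [
    { name: "General",
      forums: [ { name: "Announcements" }, { name: "Help & Support" } ] },
    { name: "Development",
      forums: [ { name: "Extensions" } ] }
  ]
};

// Round-trip through JSON text, as would happen when saving the data to a
// file and loading it again at startup.
let loadedDB = JSON.parse(JSON.stringify(exampleDB));

// Hypothetical lookup: names of the children to show under a folder.
// A null category name stands for the root folder.
function childNames(db, categoryName) {
  if (categoryName === null)
    return db.categories.map(function (c) { return c.name; });
  for (let i = 0; i < db.categories.length; i++) {
    if (db.categories[i].name === categoryName)
      return db.categories[i].forums.map(function (f) { return f.name; });
  }
  return [];
}

console.log(childNames(loadedDB, null).join(", "));
console.log(childNames(loadedDB, "General").join(", "));
```

Lookups this simple are the reason a flat file is enough here; adding a field later only means tolerating its absence when reading older files.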

For this next part, we concentrate on a single property: subFolders. Its getter typically has two parts: it first checks whether the subfolders have already been initialized and, if so, returns an enumerator over the stored values; if not, the rest of the getter, or perhaps a second function altogether, creates the subfolders.

Some code to initialize these subfolders is as follows (the logic to retrieve the database is not included and can instead be found in the source code for my extension):

    get subFolders() {
      // Already initialized: hand back an enumerator over the cached list.
      if (this._folders)
        return array2enum(this._folders);

      // If we're here, we need to initialize.
      this._inner.QueryInterface(Ci.nsIMsgFolder);
      let serverDB = this._inner.server.wrappedJSObject._db;
      if (!serverDB.categories)
        return array2enum(this._folders = []);

      // NOTE: the initializer for `level` is missing from this listing.
      // Using the top-level categories is an assumption; see the extension
      // source for the real expression.
      let level = serverDB.categories;
      let URI = this._inner.URI + '/';
      let folders = [];
      let RDF = Cc["@mozilla.org/rdf/rdf-service;1"].getService(Ci.nsIRDFService);
      let netUtils = Cc["@mozilla.org/network/io-service;1"]
                       .getService(Ci.nsINetUtil);
      for each (let sub in level) {
        // Escape the name so it is safe to use as a URI path segment.
        let folder = RDF.GetResource(URI + netUtils.escapeString(sub.name,
                                     Ci.nsINetUtil.ESCAPE_URL_PATH));
        folder.QueryInterface(Ci.nsIMsgFolder).parent = this;
        folders.push(folder);
      }
      this._folders = folders;
      return array2enum(this._folders);
    }

There are a few major things to note. First, the new folders are created via the RDF resource. Both Thunderbird and SeaMonkey use RDF for folder access, so it is still a good idea to create via the RDF service so you don't confuse the caller code. Also, with that in mind, the subfolder name still needs to be escaped in the URI, hence the calls to nsINetUtil. The auxiliary function array2enum takes in a JS array and returns a proper nsISimpleEnumerator for the array. I've excluded its definition here due to its simplicity and the length of this document; if you want to see it, you can view it in the extension source code. The last thing to note is that this code is using this._inner: this variable is a link to the nsMsgDBFolder implementation which was created for us by the JSExtendedUtils inheritance call. I will defer a more thorough treatment of this C++-JS glue until later.
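For readers who would rather not dig through the source, a helper along the lines of array2enum could look roughly like the sketch below. This is a hypothetical reconstruction, not the definition from my extension: it provides only the two nsISimpleEnumerator methods, hasMoreElements and getNext, and omits the QueryInterface method a real XPCOM object would need.

```javascript
// Hypothetical sketch of array2enum. The returned object walks the array
// once, front to back, in the style of nsISimpleEnumerator.
function array2enum(array) {
  let index = 0;
  return {
    hasMoreElements: function () {
      return index < array.length;
    },
    getNext: function () {
      // XPCOM code would throw an XPCOM error code here instead.
      if (index >= array.length)
        throw new Error("no more elements");
      return array[index++];
    }
  };
}

// Typical consumer loop.
let enumerator = array2enum(["General", "Development"]);
let seen = [];
while (enumerator.hasMoreElements())
  seen.push(enumerator.getNext());
console.log(seen.join(", "));
```

Each call creates a fresh enumerator over the same array, which is why subFolders can safely call it every time the property is read.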

Folder pane extras

At this point, you should have a simple, plain folder hierarchy, which is navigable if not fully usable. In terms of UI, though, it's not quite perfect: if you have an inbox, it will be rather indistinguishable from other folders; similarly, "fake" folders (think the [Gmail] folder if you have Gmail IMAP) show up as regular folders. These things are handled to a large degree by CSS.

A full list of the available styling points for the Thunderbird folder pane can be found on MDC. Extensions can also modify the folder pane views or add other, non-folder items. More information can be found at MDC's folder pane information page.

I would provide some example styling code here, but when I was doing testing, I discovered some related assertion failures that I have not yet had time to grok. In the interest of keeping to a posting every two weeks, I am going to defer this until either a mini "part 1.5" or the beginning of part 2, depending on how much time I will have available next week.

Notes

[1] I will not, in general, post the full code for any of the classes, only enough to demonstrate what needs to be done. For example, the classID property is omitted in this example. Something to note is that I have a modification to XPCOMUtils locally that will accept arrays of contract IDs as opposed to a single one (wfService will be implementing more than one contract ID).

[2] What it specifically does is attempt to get the server; if it fails, then it removes the account from the accounts pref. If you are compiling your own builds for your extension development profile, I recommend you remove the lines in nsMsgAccountManager::LoadAccount that remove the account on failure.

[3] In general, I will mix the IDL and C++ names for methods and properties in the course of the guide. As a basic rule of thumb, if you see a :: in the name, it's a C++ name; otherwise, it's the IDL name.

[4] getBaseMessageURI is a local function called by nsMsgDBFolder during initialization that is used to set up the URIs for getting individual messages. This function will be covered in more depth as we get messages working, but it is technically necessary for startup (a stub that does nothing is provided).

[5] A strong temptation for accounts whose sources are some web address (for example, RSS or my web forums account) is to put the base address as the hostname property. However, as you would quickly realize, that plays havoc on URI parsing, and nsMsgDBFolder::parseURI is not virtual. A better option would probably be to leave the hostname as some identifier that you use only for guaranteeing uniqueness and to store the base URI somewhere else. Since all of my folders have independent URIs associated with them, I can safely ignore the issue until account creation and subscription are covered.